One weird consequence of being more honest and direct than the average person in your culture is that people accuse you of dishonesty. It’s like “you were being unreasonable in not telling me the usual lies that I was expecting”.

Archive for the ‘Psychology’ Category

Dishonest Honesty

Tuesday, February 23rd, 2021

Autistics and Theory of Mind

Thursday, January 7th, 2021

Autistics are usually characterised as having a weak “theory of mind”. But when it comes to writing instructions and guidance I’ve found that autistics are much much better at being able to imagine themselves into the position of the target audience, think in a careful way about what needs to be said, diagnose what assumptions are missing, and work out how to set things out in a step-by-step way.

By contrast, neurotypical people write guidance that is full of missed assumptions and absent steps, and then blame the target audience for being thick or ignorant when they fail to follow the shoddily written guidance.

Why is Funny Funny?

Monday, November 16th, 2020

Occasionally, I hear the opinion that topical TV panel shows such as Have I Got News for You and Mock the Week are “scripted”. Clearly, this is meant pejoratively, not merely descriptively. A scripted programme would not be presenting itself to us honestly.

I don’t believe this (I have seen a couple of recordings of similar shows, and there isn’t any evidence of word-by-word scripting to my eye), but equally they aren’t simply a handful of people going into a studio for half-an-hour and chatting off the top of their head. My best guess for what is happening is a mixture of genuinely off-the-cuff chat, lines prepared in advance by the performers themselves, lines suggested by programme associates, material workshopped briefly before the performance, and some pre-agreed topics so that performers can work in material that they use in their live performances. All this, of course, topped by the fact that a lot of material is recorded, and the final programme is a selective edit of this material.

But, if it were to be scripted from end-to-end, and the performers essentially actors reading off an autocue, why would that be a problem? Like Pierre Menard’s version of Don Quixote, we wouldn’t know the difference. Why would knowledge that these programmes were scripted actually make them less funny? That is, that knowledge would make us laugh less at them—this isn’t just some contextual information, where we would still find it just as funny, but feel slightly cheated that it wasn’t as spontaneous as we are led to believe. We would, I would imagine, actually find it less funny.

There’s something about the human connection here. Even though we don’t know the performers personally, there is still some idea of it being “contextually funny”. Perhaps in some odd way it is “funny enough” to be funny if we believe it to be spontaneous, but not funny enough if we believe it to be scripted. Perhaps we are admiring the skill of being able to come up with the lines “on the fly”—but admiration doesn’t usually cash out in laughter. Somehow, it seems to do with the human connection that we have with these people. We find it genuinely funny because of the context.

I’ve often wondered why I can’t find other countries’ political satire funny. I can work out the wordplay in Le Canard Enchaîné, but I don’t chuckle at it. I might admire it, but the subjects of the satire are just too distant; perhaps I don’t have a stake in the subjects in the same way that I do in the people that I read about in Private Eye.

When I used to lecture on the Computational Creativity module at Kent, I would talk about the Joking Computer system, an NLP system that could generate competent puns such as “What do you get if you cross a frog with a street? A main toad.”. I used to say that we would find that joke funny—genuinely funny—if it was told to us by a six-year-old child, say your younger brother or sister, even though it isn’t a hilarious joke. Similarly, perhaps, we might give the computer some leeway—it isn’t going to produce an amazingly funny joke, but it is funny for a computer. But, this argument always felt a bit flat. Perhaps it is the human connection—we don’t care that the (soul-less) computer has “managed” to make a joke, we lack that human connection.

My drama teacher at school used to say about the performances that we took part in that he wanted people to say that they had seen a “good play”, not a “good school play”. There is something in that. Perhaps, the same is true for computational creativity. It needs to be “creative enough” to be essentially acontextual before we start to find it genuinely creative.

On Bus Drivers and Theorising

Thursday, February 7th, 2019

Why are bus drivers frequently almost aggressively literal? I get a bus from campus to my home most days (about a 2 kilometre journey), and there are two routes. Route 1 goes about every five minutes from campus, takes a fairly direct route into town, and stops at a stop about 100 metres from the West Station before turning off and going to the bus station. Route 2 goes about every half hour, takes a convoluted route through campus before passing the infrequently-used West Station bus-stop, then goes on to the bus station.

Most weeks—it has happened twice this week—someone gets on at a route 1 stop, asks for a “ticket to the West Station”, and is told “this bus doesn’t go there”. About half the time they then get off, about half the time they manage to weasel out the information that the bus goes near-as-dammit there. I appreciate that the driver’s answer is literally true—there is a “West Station” stop and route 1 buses don’t stop there. But, surely the reasonable answer isn’t a bluff “the bus doesn’t go there” but instead to say “the bus stops about five minutes’ walk away, is that okay?”. Why are they—in what seems to me to be a kind of flippant, almost aggressive way—not doing that?

I realised a while ago that I have a tendency towards theorising. When I get information, I fit it into some—sometimes mistaken—framework of understanding. I used to think that everyone did this but plenty of people don’t. When I hear “A ticket to the West Station, please” I don’t instantly think “can’t be done” but I think “this person wants to go to the West Station; this bus doesn’t go there, but the alternative is to wait around 15 minutes on average, then take the long route around the campus; but, if they get on this bus, it’ll go now directly to a point about five minutes from where they want to get to, so they should get this one.” It is weird to think that lots of people just don’t theorise in that way much at all. And I thought I was the non-neurotypical one!

Learning what is Unnecessary

Friday, December 28th, 2018

Learning which steps in a process are unnecessary is one of the hardest things to learn. Steps that are unnecessary yet harmless can easily be worked into a routine, and because they cause no problems apart from the waste of time, don’t readily appear as problems.

An example. A few years ago a (not very technical) colleague was demonstrating something to me on their computer at work. At one point, I asked them to google something, and they opened the web browser, typed the URL of the University home page into the browser, went to that page, then typed the Google URL into the browser, went to the Google home page, and then typed their query. This was not a trivial time cost; they were a hunt-and-peck typist who took a good 20-30 seconds to type each URL.

Why did they do the unnecessary step of going to the University home page first? Principally because when they had first seen someone use Google, that person had been at the University home page, and then gone to the Google page; they interpreted being at the University home page as some kind of precondition for going to Google. Moreover, it was harmless—it didn’t stop them from doing what they set out to do, and so it wasn’t flagged up to them that it was a problem. Indeed, they had built a vague mental model of what they were doing—by going to the University home page, they were somehow “logging on”, or “telling Google that this was a search from our University”. It was only on demonstrating it to me that it became clear that it was redundant, because I asked why they were doing it.

Another example. When I first learned C++, I put a semicolon after the closing curly bracket at the end of each block. Again, this is harmless: all it does is to insert some null statements into the code, which I assume the compiler strips out at optimisation. Again, I had a decent mental model for this: a vague notion of “you put semicolons at the end of meaningful units to mark the end”. It was only when I started to look at other people’s code in detail that I realised that this was unnecessary.
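To make the semicolon habit concrete, here is a minimal sketch of what such code might look like. The function name `sum_even_up_to` is made up for illustration and is not from any real codebase. As far as I understand the language rules, a stray semicolon after a block inside a function is an empty statement, and one after a function definition is an empty declaration; neither should produce any machine code, so there may be nothing for the optimiser to strip. One caveat: the habit stops being harmless if an `else` follows the block, because the semicolon ends the `if` statement and the `else` is left dangling.

```cpp
// Hypothetical function, for illustration only: sums the even numbers in 1..n.
int sum_even_up_to(int n) {
    int total = 0;
    for (int i = 1; i <= n; ++i) {
        if (i % 2 == 0) {
            total += i;
        };  // empty statement after the if-block; fine only because no `else` follows
    };      // empty statement after the loop body
    return total;
};          // at namespace scope this is an empty declaration (permitted since C++11)
```

Calling `sum_even_up_to(10)` from a `main` function behaves exactly as it would with the three stray semicolons removed.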

Learning these is hard, and usually requires us to either look carefully at external examples and compare them to our behaviour, or for a more experienced person to point them out to us. In many cases it isn’t all that important; all you lose is a bit of time. But, sometimes it can mark you out as a rube, with worse consequences than wasting a few seconds of time; an error like this can cause people to think “if they don’t know something as simple as that, then what else don’t they know?”.

Growth Mindset

Monday, August 27th, 2018

In B&Q yesterday there were two parents and a child (around 5-6 years old) pushing a trolley out to their car. The child was insistently declaring an interest in helping to move the large boxes of tiles from the trolley to the car; the father insisting each time that it was pointless, that it would take two adults to move them, and that there wasn’t any point in helping.

One thing that helped me to develop a “growth mindset”—the view that skills and intelligence are largely not fixed or innate but the result of the right kind of study and development—was that my parents found lots of ways to involve me, at a level appropriate to my knowledge, skills, and development, in so many areas of life. I have no idea whether this was a deliberate strategy or that they just fell into it, but it was very helpful in instilling a positive view of the value of productive work.

A side note: I have often wondered if being a (to a first approximation) only child helped with my learning a wide range of skills, in particular not having a gender-stereotyped pattern of skills. Because I was the only child around, I would be co-opted into helping with a lot of things, whether cooking or washing, car-repair or plumbing. Perhaps in a larger family with a mixture of genders in the children, the girls might go off to help with “women’s stuff” from female relatives, whilst the boys do “men’s stuff” with males.

Languages and the Uncanny Valley

Tuesday, September 5th, 2017

A long time ago, as a wet-behind-the-ears English person coming to Scotland for the first time, I was intrigued/surprised/amused to see a copy of The New Testament in Scots in a bookshop (the old James Thin on South Bridge, now a branch of Blackwells).

I was vaguely aware that there was a Gaelic language, which not many people used, and had a basic knowledge that there was a Scots accent and vocabulary, albeit largely gleaned from watching Russ Abbot’s “see u Jimmy” character on TV:

…but the idea of treating this as a language was alien to me. I’ve developed my knowledge of this world over the years, and can appreciate the literary qualities of it, particularly through the thoughtful work by Hugh MacDiarmid. But, what explains my initial sense that this sort of thing is a bit ludicrous, a little trying-too-hard:

…a little too close to the clearly humorous (though perhaps not evangelically purposeless) Ee by Gum, Lord!: The Gospels in Broad Yorkshire.

Why did I, 25 years ago, think that its description as “a translation” was odd? I wouldn’t have regarded a translation into French or Japanese or Guarani as strange—so, why Scots? This touches, I suppose, on the language vs. dialect debate: when does a dialect become a separate language? This seems to be an ill-defined question; there is clearly a continuum, and whilst groups of language-users cluster at certain points thereon, this doesn’t happen cleanly enough to be a series of isolated clumps.

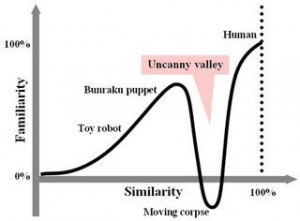

One idea that might help to explain this is the uncanny valley; here’s one of its inhabitants, a rather realistic looking humanoid robot:

This sort of thing—not far off being human, but not close enough to “pass”—is said to be uncanny, and this is backed up by a number of empirical studies. People are freaked out by this, much more than something really realistic or something more cartoony and obviously unrealistic. There is a point on the similarity scale, close to full realism, where suddenly people’s familiarity and comfort with the thing rockets downward:

I think the same is true for languages. Sufficiently far away—English to French, say, or Sanskrit—and the language is dissimilar, clearly different. Close enough—Nottinghamshire to Yorkshire, say—and the similarities are unremarkable. But the distance from RP English to Scots sits just at the right distance of unfamiliarity; like enough to be familiar, far enough away to seem different. Interestingly, the reaction is one of amusement rather than unsettledness; but, the idea of an emotional reaction being triggered by something close to but not really close to something is still there.

Crap

Monday, August 14th, 2017

I have a colleague who is a non-native speaker of English, but who speaks basically fluent English. One gotcha is that he refers to “scrap paper” as “crap paper”—which, when you think about it, isn’t too unreasonable. It’s not unreasonable that “crap paper” could be a commonly-used term for paper that doesn’t have any focused use. I’ve been procrastinating for years about whether to mention this infelicity; it is probably too late now.

Bigger lesson—it is hard, when learning a language, to hoover up that final 0.01% of erroneous knowledge.

Combinations (1)

Monday, January 23rd, 2017

One of the points where mathematics and day-to-day intuitions jar is in estimating numbers of combinations and similar combinatorial problems. I’ve just made a booking on Eurostar, and my confirmation code is a 6-letter code. Surely, my intuitive brain says, this isn’t enough; all of those people going on all of those journeys on those really long trains, day-in, day-out. Yet there are a vast number of possibilities; with one letter of the 26-letter alphabet for each of the 6 letters in the code, there are 26^6=308,915,776 possible combinations. Given that there are 10 million Eurostar passengers each year, this is enough to allocate unique codes for passengers for around thirty years. It then makes you wonder why some codes are so long, like the 90-digit MATLAB registration code that I had to type in by hand a couple of years ago.
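The arithmetic behind that estimate can be set out step by step. This is only a rough sketch. It assumes one code per passenger, no reuse of codes, all 26 letters allowed in every position, and the round figure of 10 million passengers a year quoted above:

$$
26^6 = 308{,}915{,}776, \qquad \frac{308{,}915{,}776\ \text{codes}}{10{,}000{,}000\ \text{codes per year}} \approx 30.9\ \text{years}.
$$

Each extra letter multiplies the supply by 26, so under the same assumptions a 7-letter code would last roughly 800 years.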

Mental Blocks

Thursday, October 27th, 2016

We all seem to have tiny little mental blocks, micro-aphasias, things that we, try as we might, cannot learn. My mother couldn’t remember the word “volcano”—she was a perfectly fluent native speaker of English, with no other language difficulties, but whenever she came to that word it was always “one of those mountain-things with smoke coming out of the top” or similar, followed by several seconds until the word came to her. I have a block on the ideas of “horizontal” and “vertical”. Whenever I read these, I feel my mind blurring; I know which two concepts they map on to, but for a second or two (which feels like an eternity in the usual flow of thought) I cannot fluently map the words onto the concepts. Usually I break the fog by making a gesture with my fingers—somehow, this change of mode (moving my fingers from left to right strongly associates with the word “horizontal”) dispels the confusion; it must trigger a different part of my memory associations. Quite where these odd little blocks come from—and, why we can’t just learn them away—is fascinating.

Indirect Remembering

Tuesday, August 23rd, 2016

Here’s an interesting phenomenon about memory. I sometimes remember things in an indirect way, that is, rather than remembering something directly, I remember how it deviates from the default. Two examples:

- On my father’s old car, I remembered how to open the window as “push the switch in the opposite way to what seems like the right direction.”

- On my computer, I remember how to find things about PhD vivas as “really these ought to be classified under research, but there’s already a directory called ‘external examining’ under teaching, so go in there and look for the directory called extExams and then the sub-directory called PhD”.

It makes me wonder what other things that I do have a similar convoluted story in my memory, but where the process just all happens pre-consciously.

Dilemma (1)

Wednesday, July 16th, 2014

Here’s an interesting situation. Several times a year, I take part in university open days, where I sit behind a desk answering questions about courses from prospective students. Typically, at the undergraduate open days, the punters consist of a shy 16/17 year old and one or two rather more confident parents.

Here’s my problem. I don’t want to make the assumption that the older person is the accompanying parent and the younger person the prospective student. I’d be mortified if I made that assumption on the day that a parent, bringing their child with them for moral support or lack of childcare, was the prospective student. But, this happens so rarely that the parents and student just sit down assuming that I am going to read the situation as the obvious stereotype.

How should I react in this situation? Asking “which of you is the prospective student?” is treated as a joke or, more troublingly, as evidence of density or weirdness on my part. But I still feel uncomfortable making the assumption. I’ve taken to starting with a broad, noncommittal statement like “So, what can I do for you?” or “What’s the background here then?” and hoping that it will become obvious. That isn’t too bad, but there might be a better way.

More abstractly: we try to avoid stereotypes and making assumptions about people and situations based on initial appearance. But, what do you do when the stereotype is so commonplacely true that even the people being stereotypical are expecting that you will react using the stereotype as context?

Forms of Embarrassment (3)

Tuesday, February 18th, 2014

Get to the bus stop to find that someone is waiting at the wrong end of the stop. Stand at the correct end of the stop, a few other people come to the stop. The bus arrives, both of us want to get on the same bus, I let them get on first, get a slightly strange look from them, like I’ve broken some taboo about having paid enough attention to someone in a public space to recognise them a few minutes later. But, I didn’t want to barge on in front of them in case they felt I was being boorish by not recognising that they had got to the stop before me.

Rubes, Tyros and Uptight Noobs

Saturday, July 14th, 2012

One difficulty that I have when placed in a new environment, e.g., travelling to a new country or working in a new place, is adapting to day-to-day norms. Travel books are full of advice of the “always insist on taxi drivers using the meter” kind, but I always find it difficult when the reaction of the local is a slightly shocked-bemused look and a comment like “really?”. One problem is that the travel books tend to be quite stiff and risk-averse, for good reason. We don’t want to be taken as a rube or tyro, and so we go along with “what seems normal” in a particular situation, rather than being the stiff outsider who seems to be the first person in a century to insist on rules being followed to the letter.

I wonder if this sort of thing happens at all levels of engagement with novelty. We sometimes hear of a senior politician who is railroaded along into carrying out some corrupt or biased action. The common response to this is to say “come on, you were the Prime Minister, how could you have been so ignorant/allowed yourself to be taken along for a ride”? But, I don’t think it is as easy as that; I can readily imagine a situation in which you are told “actually, minister, we don’t really do things like that” by some adviser or civil servant, and exactly the same kind of psychology as above kicks in. However experienced a politician you might be, being Prime Minister (or whatever) is still new, for quite a while, and I can imagine that the pressure not to look like some uptight noob is very influential.

Would you rather be a Jerk or an Asshole?

Wednesday, February 29th, 2012

I came across a pair of cod-definitions a while ago (I can’t find the reference), along the following lines:

- A jerk is someone who, because their attention is elsewhere or they don’t know the consequences of their actions, messes up things for other people

- An asshole is someone who knows that their actions will mess things up for other people but goes ahead anyway

Would you rather be seen as a jerk or an asshole? Probably neither! But, if you screw up in public—say by tripping someone or bumping into someone in public—you would rather that this be seen as an accident rather than a result of you (say) thinking that you need to get somewhere quickly and so you are going to push your way through the crowd regardless of whether you trip or bump other people.

How do we try to achieve this? We usually do this by following up an apology with an explanation, whether a real one or a made-up one: “sorry, just got new glasses yesterday and I’m still adjusting”. By giving this explanation, we are trying to change the perception of the person we offended by moving from the (seeming default) asshole category to a position that we might call “justified jerk”, where we messed up because we weren’t sensitive enough to realise that our situation (say, the new glasses) required more care, but at least we have some reason for it. We are essentially sending out an appeal for empathy.

Usually, though, this doesn’t work. The explanation gets taken as an “excuse” and just harrumphed off. I wonder why? Perhaps this is a variant on the Dunning-Kruger phenomenon, where people who are bad at some task overestimate their ability at the task. I imagine that when we ask for empathy in the situation, the other person thinks “if I’d just got new glasses, I’d have been more careful and slowed down my pace of walking etc.”. As a result, we get bumped back into asshole territory: because we have tried to give an explanation, we have shown some awareness of the situation, and therefore given evidence that we had some understanding that we could have used to anticipate the problem, so the problem is because of our arrogant disregard rather than because of casual error.

Perhaps just an apology is better? But then, we don’t move the person away from their default position that we are doing this for assholey reasons. Perhaps there is no way out of this bind.

Propositional and Deduced Knowledge and Memory Conditions

Tuesday, December 27th, 2011

Here is something that I have observed, which has interesting implications for memory problems like Alzheimer’s disease. My father has some memory problems, and what is interesting is that he can recall some facts learned a long time ago, but some of the deductions from that knowledge aren’t readily recalled. For example, he can remember each of his two marriages, but when asked “how many times were you married?” he doesn’t know.

Here is my hypothesis about what is happening. He has a clear memory of the two marriages as specific sets of events, but has not “bothered” to learn the fact “I have been married twice” as a specific propositional fact, as this can be deduced immediately from the memories of those two specific facts. However, as he has lost speed of access to specific memories, the ability to make that link from particular pieces of knowledge to a new “deduced” piece of knowledge has declined, and so he has trouble accessing the pieces of knowledge that were never stored as explicit propositional knowledge but which were always present as immediate deductions from readily recalled facts.

Do we not bother learning some things because we can instantly deduce them from other knowledge, and then if we have memory problems we actually end up with this being a problem?

Self-reinforcing Criticism

Tuesday, October 25th, 2011

There is an interesting rhetorical move that I notice increasingly, which we might refer to as self-reinforcing criticism. An example of this is given in one of Edward De Bono’s books: a caricature of a Freudian analyst argues that some negative trait that someone has is due to their repressing some aspect of their personality. The person being criticized has very little in the way of response. Either they agree, or they disagree. If they disagree then that can itself be used as evidence of even deeper repression!

A common use of this is in planning processes in organisations. A complex proposal will be presented, which is roundly criticized for a number of reasons. However, rather than taking on the criticisms, the person presenting the argument counters with the argument that the critics are just “afraid of change”.

We need a term to “call out” this kind of specious argument. I have experimented by calling out the emotional aspects of it: “why are you in a position to know how I am feeling?”. But this isn’t ideal. We need a term of art to describe this, and then to create a pejorative sense to that term. Perhaps a term for this already exists in rhetoric somewhere?

Relatedly, there is a phenomenon where a complex proposal will be presented and, if it is attacked, the proposer will say “well, what do you suggest instead?”. This is difficult to respond to, as the proposer is in the position where they have had days, weeks or months to prepare their proposal, whereas the off-footed opponent has a matter of seconds or minutes. I wonder if we should be working harder to make multi-alternative proposals both normal (so that proposals with only one alternative are seen as weak) and acceptable (so that presenting proposals with multiple alternatives is not seen as being weak and indecisive).

Muddling through Morality

Tuesday, October 25th, 2011

Thinking about the basis for moral action as a result of Stuart Sutherland’s interesting lecture earlier this evening on “Hume and Civil Society”. Hume skeptically examines various putative bases for moral action, and finds many of them wanting—religion, social norms, rational thought.

I was wondering whether, in practice, there is a single “base theory” like this, though. Perhaps there are a number of different bases, and the consequences of these all largely coincide. Groups might seem to be acting in a coherent moral fashion, but each individual’s morality might have a different basis; or, more likely, combination of bases. Some might be driven by emotional repugnance, some by rationally thinking through the consequences of their action, some by social norms, some by fear of (spiritual or temporal) authority, most by some mixture of them all. In the end they all do more-or-less the same thing. This has a flavour of the “Swiss cheese” theory of risk: most of the time at least one of our moral bases kicks in to prevent us acting immorally, and it is only when all of the bases are absent, or else miscued in a particular context, that morality fails.

Forms of Embarrassment (1)

Tuesday, October 25th, 2011

A project to enumerate unusual forms of embarrassment (part 1).

One particular form of embarrassment that catches me by surprise is when people think that I have said something much more shocking/out of character than I actually had.

For example, a couple of years ago I was talking about hash tables and dictionary lookup in a lecture and used an example of animals and their names. One example pairing was (Panda, “Eats, shoots and leaves”). I was familiar with a version of the joke about a panda who eats a meal, shoots the waiter and then walks out of the restaurant; I was not familiar at the time with a variant in which the “shoots” refers to ejaculation. As I began to tell the joke, a few members of the audience (should I really be thinking of students as “audience”!) began to titter or look shocked that their lecturer was going to tell an off-colour joke. I found out after the lecture by talking to students what had caused this.

Of course, it is a shocking indictment of our society’s values that a joke about someone being murdered in cold blood is inoffensive, whereas the slightest sexual reference is shocking.

Moment Form and Dementia

Wednesday, March 9th, 2011

Momentform is a description of a style of musical composition devised by Stockhausen and first used in his piece Kontakte. The key idea is that a piece is constructed so that each moment is appreciable in its own right; by contrast, most traditional musical forms are based around the idea of some kind of temporal structure such as narrative or development.

I wonder if there is some scope for this as the basis for a musical form that would be appreciated by people with memory problems and dementia. One of the features of many kinds of dementia is that patients find it difficult to form a coherent structure from what is happening in the world. Perhaps, rather than trying to force a narrative-based musical form on such a person, we should be inventing forms that are appropriate for them.